Query by Video: Cross-modal Music Retrieval

This project is a collaboration with Spotify, mentored by Aparna Kumar.

Publication

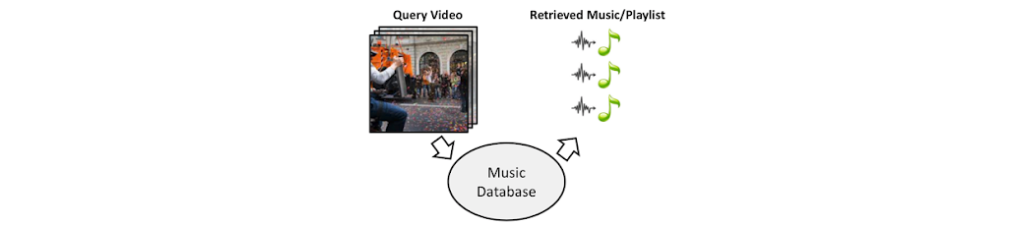

Problem Statement

- Input: A short video clip.

- Output: A list of retrieved songs from an existing music database.

Method

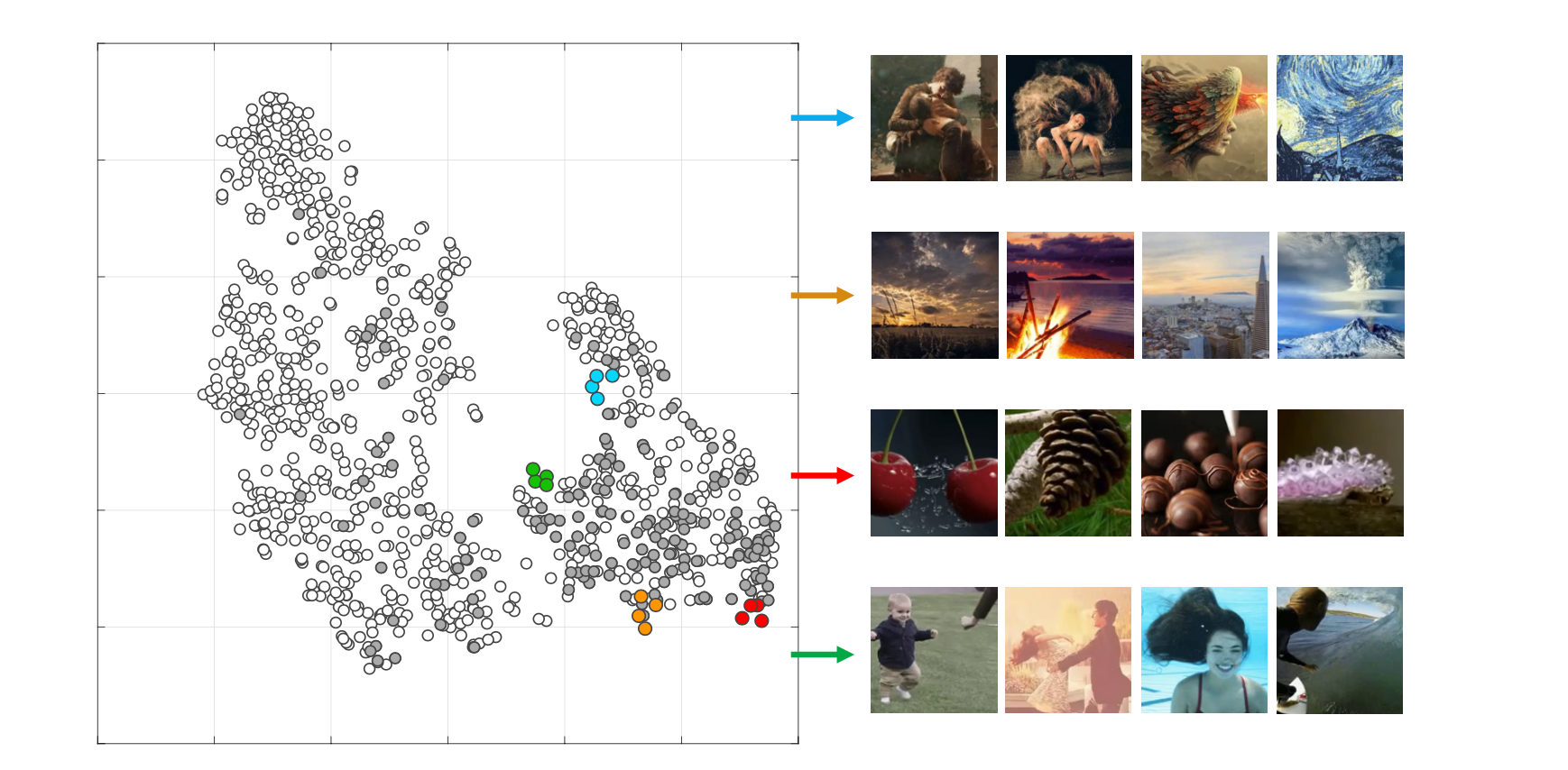

The t-SNE visualization of the latent emotion space. Four randomly selected regions are shown in colors representing different emotion concepts (gloomy, ambient, delicate, and sweet), each with thumbnails of the paired videos.

Demo Results

Demo 1

The demo videos present the input silent video with the music track retrieved by the proposed model.

- Query videos are from: The test split of the Cowen2017 dataset.

- Music Database contains: The test split of the AudioSet Music Mood Subset (354 music excerpts).

Note

- Each YouTube link includes 30 query videos, each presented with its top 5 retrieved music excerpts.

- The rank and cross-modal distance of each retrieved excerpt are displayed.

- Query videos vary in duration, but every music excerpt is 10 seconds long, so some videos end before the audio track does.
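The ranking shown in the demos can be illustrated with a minimal sketch. It assumes that video and music embeddings already live in a shared latent space and that the cross-modal distance is Euclidean; the page does not specify the metric. The embeddings here are random placeholders, and the names `retrieve_top_k`, `video_emb`, `music_embs`, and the dimension `dim` are hypothetical, not taken from the project code.

```python
import math
import random


def euclidean(a, b):
    """Euclidean distance between two equal-length vectors (assumed metric)."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def retrieve_top_k(video_emb, music_embs, k=5):
    """Return (rank, music_index, distance) for the k closest music embeddings."""
    # Score every excerpt in the database, then sort by ascending distance.
    scored = sorted(
        (euclidean(video_emb, emb), idx) for idx, emb in enumerate(music_embs)
    )
    return [
        (rank, idx, dist)
        for rank, (dist, idx) in enumerate(scored[:k], start=1)
    ]


# Placeholder data: 354 excerpts, matching the database size used in Demo 1.
random.seed(0)
dim = 8  # hypothetical embedding size
music_embs = [[random.gauss(0, 1) for _ in range(dim)] for _ in range(354)]
video_emb = [random.gauss(0, 1) for _ in range(dim)]

results = retrieve_top_k(video_emb, music_embs, k=5)
for rank, idx, dist in results:
    print(f"rank {rank}: excerpt #{idx}, distance {dist:.3f}")
```

A smaller distance means a closer match, so rank 1 is the excerpt nearest to the query video in the latent space.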

Demo 2

The demo videos present the input silent video with the music track retrieved by the proposed model.

- Query videos are from: Top video posts on Instagram.

- Music Database contains: Spotify popular genres (1195 music excerpts).

Note

- For each video, only the top-ranked retrieved music excerpt is presented.